This is the second time I have met Naveen Rao.

As at our first meeting, he opened up as soon as the conversation turned to AI, with an endless stream of ideas and theories, full of learning and speaking with easy confidence.

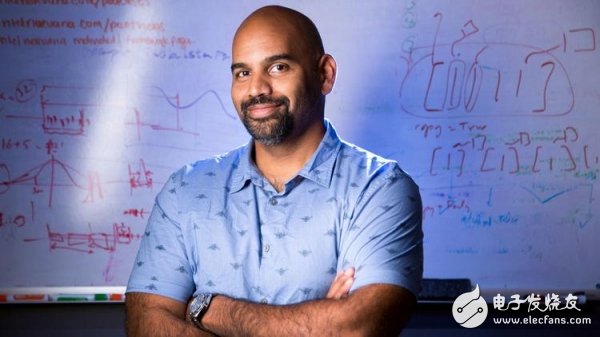

Naveen Rao, Intel Global Vice President and General Manager of the Artificial Intelligence Products Group (AIPG)

His love of extreme sports forms a striking contrast with his warm, professorial temperament. The 40-year-old AI expert is also an avid sportsman, and he has injured every one of his fingers while skiing, skateboarding, cycling, racing, wrestling and playing basketball. Perhaps an adventurer like this is better suited to steering artificial intelligence. After Nervana, the deep learning startup he founded, was acquired by Intel, it was quickly integrated into Intel's core AI strategy, and Rao is now at the helm of Intel's Artificial Intelligence Products Group (AIPG).

Of joining Intel, Rao said, "This is an open culture." He likes teamwork very much. Marshaling resources is never easy, but Intel has rich experience in bringing products to market and a strong cohesive pull. The company's departments have grown stronger and are working together toward a common goal.

At Intel, hard work always matters more than empty talk. At Intel's first AI Developers Conference, led by Rao, the "heavy hitters" of Intel's AI-related business units appeared together, which is probably a first in the history of Intel AI. Bear in mind that, outside Intel's internal meetings, the chance of seeing such a group of top experts gathered in public is close to zero.

But Intel did not disappoint.

This time it fielded a star-studded Intel "AI Galaxy Team" (let's call it that), shown below from left to right:

Jennifer Huffstetler, Vice President, Data Center Business Unit and General Manager, Data Center Product and Storage Marketing, Intel

Reynette Au, Vice President, Intel Programmable Solutions Group

Jack Weast, Senior Principal Engineer and Chief Architect, Intel Driverless Solutions

Gayle Sheppard, Vice President, Intel New Technology Group and General Manager, Saffron Artificial Intelligence Division

Remi El-Ouazzane, Vice President, New Technologies Division, and General Manager, Movidius

Jonathon Ballon, Vice President, Intel IoT Business Unit

Naveen Rao, Vice President, Intel Corporation and General Manager, Artificial Intelligence Products Division

Although this lineup rivals Marvel's "Avengers", there is still one master outside the frame.

Carey Kloss, Vice President of Intel's Artificial Intelligence Products Group and a core member of the Nervana team

Carey Kloss is a vice president of Intel's Artificial Intelligence Products Group and a core member of the Nervana team. Although he does not appear in the picture above, he told us how much he values the team: "Intel has the best post-silicon bring-up and architecture analysis I have seen so far." Thanks to this, the Nervana Neural Network Processor (NNP) has improved greatly.

In fact, the NNP is also Intel's long-awaited trump card. At this AI Developers Conference, Rao unveiled Intel's next-generation dedicated AI chip, the Intel Nervana NNP-L1000, code-named "Spring Crest". The chip is set to become Intel's first commercial neural network processor product and is planned for release in 2019.

Although Rao did not reveal details of the new generation of AI chips, Carey Kloss, a member of Nervana's founding team, knows the secrets, and we were certainly not going to let him off. During the AI Developers Conference we had a down-to-earth conversation with him. It turns out this is how Intel's carefully laid plan is meant to play out.

Nervana NNP: performance of the new AI chip jumps 3-4 times, but its power has not yet been fully unleashed

In Rao's one-hour keynote speech, the most important announcement was none other than the Intel Nervana neural network processor.

"Lake Crest" (the code name of the first chip in the Nervana NNP series), first announced in October last year, was like "timely rain": it helped Intel gain a foothold in the race for dedicated AI chips. But Intel has set a bigger goal, which is to increase deep learning training performance 100-fold by 2020. The Crest family is likely to be the fastest route to that goal.

Creating a chip is no easy task, and without a fanatical, dedicated development team behind it, the result will fall short. So the question insiders care most about is: how did the Intel IC design team behind the Nervana neural network processor series create a "Spring Crest" with 3-4 times the existing performance?

Although Carey Kloss was tight-lipped about the Nervana neural network processor, we kept him talking and came away with the following key information:

1. The main difference between Lake Crest and Spring Crest

As the first-generation processor, Lake Crest achieves very good compute utilization on both GEMM (general matrix multiplication) and convolutional neural networks. This is not just the 96% throughput utilization figure: even without extensive customization, Nervana achieves GEMM compute utilization above 80% in most cases. If the next generation of chips can maintain high compute utilization, the new product will deliver a 3- to 4-fold performance improvement.

2. Lake Crest's compute utilization reached 96%, so why is the figure lower rather than higher for Spring Crest?

This is a marketing decision to state a somewhat lower utilization figure. In some cases 98% can be achieved. When there are no resource conflicts, every chip can run flat out, reaching 99% or even 100% compute utilization. But Intel wants to show the utilization achievable in most cases, so the figure was adjusted accordingly.
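To make the link between utilization and delivered performance concrete, here is a minimal sketch in Python. It assumes delivered throughput is simply peak throughput multiplied by compute utilization. The function names and any peak figures passed in are hypothetical and are not Intel specifications.

```python
def effective_throughput(peak_tflops: float, utilization: float) -> float:
    """Delivered throughput, assuming it scales linearly with utilization."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return peak_tflops * utilization


def generational_speedup(old_peak: float, old_util: float,
                         new_peak: float, new_util: float) -> float:
    """Ratio of delivered throughput between two chip generations."""
    return (effective_throughput(new_peak, new_util)
            / effective_throughput(old_peak, old_util))
```

With placeholder inputs such as `generational_speedup(1.0, 0.8, 3.5, 0.8)`, the result is roughly 3.5: at equal utilization the speedup equals the ratio of peak throughput, which is why holding utilization high matters for the quoted 3 to 4 times gain.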

3. Why has the release schedule of the Nervana chip been repeatedly delayed?

There are two phases. Nervana began work on Lake Crest when it was founded in 2014. At the time the team was about 45 people, and it was building the largest die (silicon chip), developing Neon (deep learning software) and building a cloud stack, all with a small team. But that was also the challenge: a small team goes through a lot of growing pains. It took Nervana a long time to get its first products out, and the chip was finally released last year. As for why Spring Crest is scheduled for the end of 2019: it needs to integrate more dies to get faster processing speed, but manufacturing the silicon takes time, and turning that silicon into a new neural network processor takes more. That is the reason for the delay. At the moment Spring Crest is on a reasonable schedule and has all the elements to succeed next year.

4. What adverse effects do the delays have on Intel?

Carey Kloss does not believe Intel will be at a disadvantage in neural network processors, because Intel responds relatively quickly. One important factor is the gradual shift to bfloat16, a numerical data format that is widely used for neural networks in the industry and very popular in the market. In the future, Intel will expand support for bfloat16 across its artificial intelligence product line, including Xeon processors and FPGAs.
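For readers unfamiliar with the format: bfloat16 keeps the sign bit and the 8 exponent bits of a 32-bit IEEE 754 float and shortens the mantissa to 7 bits, so a float32 value can be converted by keeping its upper 16 bits. The sketch below illustrates that idea in plain Python. The function names are illustrative, and round-to-nearest-even is an assumption here (one common choice), not a statement about how Intel hardware rounds.

```python
import struct


def float32_to_bfloat16_bits(x: float) -> int:
    """Return the 16-bit bfloat16 pattern for x (round-to-nearest-even)."""
    if x != x:  # NaN: return a canonical quiet NaN pattern
        return 0x7FC0
    # Reinterpret x as its 32-bit IEEE 754 single-precision bit pattern.
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    # Bias so that the discarded low 16 bits round to nearest, ties to even.
    rounding_bias = 0x7FFF + ((bits >> 16) & 1)
    return ((bits + rounding_bias) >> 16) & 0xFFFF


def bfloat16_bits_to_float32(b: int) -> float:
    """Expand a bfloat16 bit pattern back to a float by zero-filling the low bits."""
    return struct.unpack("<f", struct.pack("<I", (b & 0xFFFF) << 16))[0]
```

Because the exponent field is unchanged, the dynamic range matches float32 while precision drops to about two or three decimal digits: converting 3.14159 and back yields 3.140625.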

5. nGraph compared with CUDA: not afraid

Beyond hardware, Intel is also stepping up its software efforts. The Intel AIPG is currently developing software called nGraph, a framework-neutral deep neural network (DNN) model compiler. Intel is integrating deep learning frameworks such as TensorFlow, MXNet, PaddlePaddle, CNTK and ONNX on top of nGraph.

Because both are platform concepts, many people like to compare Intel with NVIDIA, the company that represents GPUs. In fact, Carey Kloss draws a clear distinction between nGraph and the competing CUDA platform.

"nGraph is not the same as CUDA. CUDA can be understood as the layer beneath nGraph, which we call a transformer. nGraph receives input from TensorFlow, Caffe or MXNet through a fixed API, then optimizes performance through the graph compiler, removing things you don't need, and then sends the result to MKL-DNN on the CPU. So the CPU still uses MKL-DNN, even under nGraph." It is not hard to see that Intel also intends to bring chip development onto a unified platform. nGraph is being built as the interface for developing AI applications on all Intel chips.

While the new-generation Nervana NNP-L1000 is still in research and development, Intel's other chip, the VPU focused on computer vision, has already been commercialized. As for what market expectations Intel has placed on this chip, let's look at the answer from another master who is also outside the frame.
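The flow Kloss describes (a framework hands over a graph, the compiler removes what is not needed, and a backend "transformer" executes the rest) can be sketched as a toy example. This is an illustration only and does not use the real nGraph API: `Node`, `prune_unused` and `CpuBackend` are hypothetical names, and the backend does plain Python arithmetic where a real one would call a kernel library such as MKL-DNN.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    op: str  # "input", "add" or "mul" in this toy graph
    inputs: list = field(default_factory=list)


def prune_unused(nodes, outputs):
    """Keep only the nodes reachable from the requested outputs."""
    by_name = {n.name: n for n in nodes}
    keep, stack = set(), list(outputs)
    while stack:
        name = stack.pop()
        if name in keep:
            continue
        keep.add(name)
        stack.extend(by_name[name].inputs)
    # Preserve the original (topological) order of the surviving nodes.
    return [n for n in nodes if n.name in keep]


class CpuBackend:
    """Hypothetical stand-in for a backend that executes the pruned graph."""

    def run(self, nodes, feeds):
        values = dict(feeds)
        for n in nodes:  # nodes are assumed to be topologically ordered
            if n.op == "input":
                continue
            a, b = (values[i] for i in n.inputs)
            if n.op == "add":
                values[n.name] = a + b
            elif n.op == "mul":
                values[n.name] = a * b
            else:
                raise ValueError(f"unsupported op: {n.op}")
        return values


graph = [
    Node("x", "input"),
    Node("y", "input"),
    Node("s", "add", ["x", "y"]),
    Node("unused", "mul", ["x", "y"]),  # never contributes to the output
    Node("out", "mul", ["s", "x"]),
]
pruned = prune_unused(graph, ["out"])
result = CpuBackend().run(pruned, {"x": 2.0, "y": 3.0})
```

The design point is the separation of concerns: the graph and the pruning pass know nothing about the hardware, so supporting another chip would, in this sketch, mean adding another backend class rather than changing the framework-facing side.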
